Consumer Crypto Product Ideas

A friend just emailed to ask about requests for crypto products - what products am I excited to see in crypto that I haven’t seen yet?

This is such a fun question. Here is the list I sent back - I’m putting it here as well in case someone is working on any of these (in which case I would love to see them), or in case someone is looking for ideas for things to build.

Generally, I am excited right now about new consumer experiences that crypto can enable. Since the last wave of breakout crypto apps, a bunch of work has gone into building better infrastructure (for instance, when CryptoKitties launched, it slowed down Ethereum, but now Algorand has just launched NFTs and it is fast fast fast), so I think we are ready for another breakout crypto app. I also think that serious things often start as toys, so I am excited about fun, toy-like crypto experiences that can introduce mass consumers to holding and using crypto and pave the way for more serious crypto usage like DeFi products. So here are some requests for social consumer crypto products I think could be fun:

1. People love representing themselves as avatars on the internet - just count how many of the people you follow on Twitter use cartoon versions of themselves as avatars. A third of the USV investment team uses avatars as their Twitter profile pics! Someone is going to own that. Maybe Snap/Bitmoji or Apple/Memoji, but maybe not. I think someone is going to build an amazing, fun, wacky consumer avatar platform, and that is of course going to be built on crypto. There is going to be a huge marketplace component to this avatar product - creators are going to make $ selling digital merch for avatars, and people are going to buy and resell avatar merch in secondary markets, so of course this is a crypto play.

2. Lots of teams are trying to build livestreaming video social experiences like live education, live meditation, live music, live fitness, live cooking, etc. Each team building a Twitch-like live video product has to rebuild all of the same things from the ground up. There are livestream video APIs, but that is only half the battle: there are also all the social features on top, like chat and karma and collectibles and polls and games, that these teams have to build from scratch. Someone could build a white-label livestream social app in a box that all of these other companies can build their products on. That of course should be crypto native - collectibles and points? Def crypto.

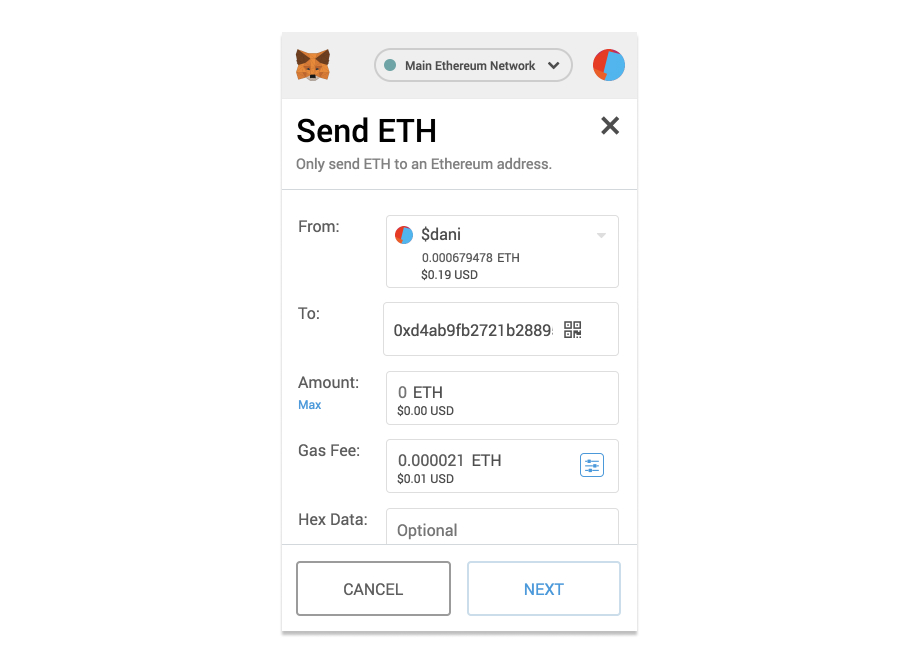

3. Public Robinhood - a wacky part of investing in crypto is that it's public, so there could be a consumer experience built around that. It's like Robinhood for crypto, in that it's a consumer experience for buying and selling crypto, but it's also public and social: you compete against your friends and have a public profile. Maybe it starts with collectibles trading. Maybe absolute numbers aren’t public but percentage change is. Maybe there is a very small cap on how much money you can put in so it’s about creativity and not earnings. Not sure.
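
To make the last two "maybes" in idea 3 a bit more concrete, here is a rough Python sketch of how a public profile could show percentage change without showing absolute balances, with a small cap on deposits. Everything in it is made up for illustration: the `Portfolio` class, the `public_leaderboard` function, and the $100 cap are assumptions, not a description of any real product or API.

```python
from dataclasses import dataclass

# Hypothetical cap on total deposits, so the game is about creativity, not earnings.
MAX_DEPOSIT_USD = 100.0


@dataclass
class Portfolio:
    owner: str
    deposited_usd: float = 0.0      # private: never shown publicly
    current_value_usd: float = 0.0  # private: never shown publicly

    def deposit(self, amount_usd: float) -> None:
        """Add funds, rejecting anything that would exceed the cap."""
        if amount_usd <= 0:
            raise ValueError("deposit must be positive")
        if self.deposited_usd + amount_usd > MAX_DEPOSIT_USD:
            raise ValueError("deposit would exceed the cap")
        self.deposited_usd += amount_usd
        self.current_value_usd += amount_usd

    def percent_change(self) -> float:
        """Return gain or loss relative to total deposits, in percent."""
        if self.deposited_usd == 0:
            return 0.0
        return (self.current_value_usd - self.deposited_usd) * 100 / self.deposited_usd


def public_leaderboard(portfolios):
    """Return (owner, percent change) pairs, best first, with no dollar amounts."""
    rows = [(p.owner, round(p.percent_change(), 2)) for p in portfolios]
    return sorted(rows, key=lambda row: row[1], reverse=True)
```

With this shape, a friend who turned $50 into $60 ranks above one who turned $100 into $110, and nobody sees how many dollars either of them actually has.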